Law Firms Using AI: Policies That Prevent Bad Outputs

If your firm is experimenting with generative AI, you are not alone. But the real differentiator for law firms using AI is not who has the newest tool; it is who has the policies and controls that prevent bad outputs from reaching a client, a carrier, or a court.

Bad outputs usually fall into a few buckets: hallucinated citations, wrong medical facts, missing exhibits, confidentiality leaks, and overconfident analysis presented as certainty. The good news is that most of this risk is manageable with clear rules, lightweight QA, and good records.

Why “bad outputs” are a policy problem (not just a tool problem)

Even strong AI systems can produce plausible language that is factually wrong. Courts have already sanctioned lawyers for filing AI-generated case citations that did not exist (for example, Mata v. Avianca, 2023). That case is now a standard cautionary tale for a reason: the failure was not “AI”; it was the absence of a verification process.

Two widely cited frameworks point in the same direction:

- ABA Formal Opinion 512 (2024) emphasizes competence, confidentiality, and supervision when lawyers use generative AI.

- The NIST AI Risk Management Framework (AI RMF 1.0) recommends governance, documentation, and ongoing measurement for AI risk.

In practice, your “AI policy” should function like a mini quality-management system: define allowed uses, define review steps, and define what must be documented.

The policy stack that prevents bad AI outputs

1) An “allowed uses” matrix (what AI can draft vs what AI can decide)

Start with a simple rule: AI can draft, summarize, and outline, but it cannot be the final authority on legal conclusions or factual assertions.

A workable allowed-uses matrix usually includes:

- Green (low risk): formatting, tone edits, initial issue spotting, deposition topic lists, chronology extraction.

- Yellow (medium risk): demand letter drafts, medical summaries, discovery responses, settlement narratives.

- Red (high risk): final citation statements, jurisdiction-specific legal conclusions, anything filed without attorney verification.

This helps associates and staff move fast without guessing where the line is.
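One way to make the matrix usable day to day is to keep it as a small lookup table that staff or intake tooling can consult. The sketch below is illustrative only: the task names are hypothetical labels based on the tiers above, and the choice to treat unlisted tasks as red is an assumption, not something your policy has to adopt.

```python
from enum import Enum


class Risk(Enum):
    GREEN = "green"    # low risk
    YELLOW = "yellow"  # medium risk
    RED = "red"        # high risk


# Hypothetical task labels, drawn from the three tiers described above.
ALLOWED_USES = {
    "formatting": Risk.GREEN,
    "tone_edit": Risk.GREEN,
    "chronology_extraction": Risk.GREEN,
    "demand_letter_draft": Risk.YELLOW,
    "medical_summary": Risk.YELLOW,
    "discovery_response": Risk.YELLOW,
    "final_citation_statement": Risk.RED,
    "jurisdiction_specific_conclusion": Risk.RED,
}


def risk_for(task: str) -> Risk:
    """Return the tier for a task. Unlisted tasks default to RED (an assumption)."""
    return ALLOWED_USES.get(task, Risk.RED)
```

Defaulting unknown tasks to the strictest tier means a new use case gets a deliberate classification instead of slipping through as low risk.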

2) A mandatory human review standard with a “verification checklist”

Most AI failures become dangerous when the reviewer only checks whether the writing sounds good.

Adopt a minimum verification checklist for any client-facing or litigation-ready output:

- Citations: every case, statute, regulation, and quote is checked in a primary source database.

- Record facts: every date, diagnosis, provider, and dollar amount is traced to a document page.

- Scope: the output matches the requested jurisdiction, time period, and document set.

- Completeness: exhibits and key records are not silently omitted.

Keep it short. Consistency beats complexity.
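If your firm tracks reviews in software rather than on paper, the four checks above can be modeled as a tiny sign-off record. This is a minimal sketch under assumptions: the `Review` class and the check names are invented for illustration and do not describe any particular product.

```python
from dataclasses import dataclass, field

# The four checks from the list above, as hypothetical identifiers.
CHECKS = ("citations", "record_facts", "scope", "completeness")


@dataclass
class Review:
    reviewer: str
    passed: set = field(default_factory=set)

    def mark(self, check: str) -> None:
        """Record that the reviewer completed one of the known checks."""
        if check not in CHECKS:
            raise ValueError(f"unknown check: {check}")
        self.passed.add(check)

    def outstanding(self) -> list:
        """Return the checks not yet completed, in checklist order."""
        return [c for c in CHECKS if c not in self.passed]

    def ready(self) -> bool:
        """True only when every check has been completed."""
        return not self.outstanding()
```

A draft would be released only when `ready()` returns `True`, so a reviewer cannot approve on style alone.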

3) A source-grounding rule (no “freehand” facts)

Require that summaries and analytics be grounded in the uploaded record, not general assumptions.

Practically, the policy can say:

- Any medical or liability statement must be tied to a record cite (Bates range, page/line, or document name and page).

- If the system cannot support a statement with a cite, it must label it as “unverified” or omit it.

This single rule prevents a large share of embarrassing errors.
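The rule is simple enough to express as a filter over drafted statements. The following sketch assumes each statement arrives with an optional record cite. The `Statement` type and the `omit_unsupported` switch are hypothetical names used for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Statement:
    text: str
    cite: Optional[str] = None  # e.g. a Bates range or "document name, p. N"


def apply_grounding_rule(statements, omit_unsupported=False):
    """Append the cite to supported statements; label or drop the rest."""
    result = []
    for s in statements:
        if s.cite and s.cite.strip():
            result.append(f"{s.text} [{s.cite}]")
        elif not omit_unsupported:
            result.append(f"{s.text} [unverified]")
        # Unsupported statements are silently dropped when omit_unsupported is True.
    return result
```

For example, with invented sample data:

```python
draft = [
    Statement("The intake exam noted a shoulder injury.", "Records.pdf, p. 12"),
    Statement("The plaintiff missed work afterward."),  # no cite supplied
]
for line in apply_grounding_rule(draft):
    print(line)
```

The second statement comes out tagged `[unverified]`, which tells the reviewer exactly where to look before the draft goes anywhere.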

4) A confidentiality and data-handling policy (especially for PHI and sensitive facts)

For personal injury and medical records, confidentiality is not optional and neither is clarity.

Your policy should specify:

- Which categories of data are allowed in AI workflows and which are restricted (for example, PHI, information about minors, sealed records, and trade secrets).

- How data is stored and who can access it.

- Whether prompts/outputs are retained, and for how long.

This aligns with the ABA’s confidentiality emphasis in Formal Opinion 512 and helps with client and carrier expectations.
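A data-handling policy like this can also be written down in machine-checkable form. The sketch below is only an illustration: the category labels, the blocked set, and the 90-day retention period are placeholder assumptions, and your firm's actual choices should come from its own policy and obligations.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class DataPolicy:
    blocked_categories: frozenset  # categories that must not enter AI workflows
    retention_days: int            # how long prompts/outputs are retained

    def may_use(self, categories) -> bool:
        """True if none of the document's categories are blocked."""
        return not (set(categories) & self.blocked_categories)

    def purge_after(self, created: date) -> date:
        """Date after which retained prompts/outputs should be deleted."""
        return created + timedelta(days=self.retention_days)


# Placeholder values for illustration only.
policy = DataPolicy(blocked_categories=frozenset({"sealed_records"}), retention_days=90)
```

Writing the rules this way forces the firm to state, for each category, whether it is permitted, and to pick a concrete retention period.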

5) A “no blind copy-paste” rule for filings

Make this explicit: no AI-generated text goes into a pleading, motion, brief, or discovery response without attorney sign-off and citation verification.

This is not anti-innovation. It is the same supervision principle firms already apply to junior drafts.

6) A logging and audit trail requirement

When something goes wrong, you need to answer basic questions quickly: what documents were used, what prompt was used, what version of the draft was approved, and who approved it.

Set a minimum standard for what gets saved:

- Document set identifier (matter name, upload batch, date)

- Draft type (demand letter, medical summary, depo outline)

- Reviewer name and approval date

Even lightweight logs materially improve defensibility.
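A log entry covering the minimum fields above can be as small as one record per approved draft. This is a sketch, assuming the log is stored as one JSON object per line. The `AuditEntry` field names are hypothetical, and the sample values are placeholders.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class AuditEntry:
    matter: str         # document set identifier: matter name
    upload_batch: str   # document set identifier: upload batch
    upload_date: str    # ISO date of the upload, e.g. "2024-01-15"
    draft_type: str     # e.g. "demand letter", "medical summary", "depo outline"
    reviewer: str
    approval_date: str  # ISO date of approval


def to_log_line(entry: AuditEntry) -> str:
    """Serialize an entry as one JSON line with a stable key order."""
    return json.dumps(asdict(entry), sort_keys=True)


# Placeholder values for illustration only.
entry = AuditEntry("Example Matter", "batch-001", "2024-01-15",
                   "medical summary", "Reviewer A", "2024-01-16")
print(to_log_line(entry))
```

Firms that also want to answer the prompt and draft-version questions could add fields for those in the same record.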

Minimum controls, in one table

| Policy area | Common bad output it prevents | Minimum control | Evidence to keep |
|---|---|---|---|
| Allowed uses | Overreliance on AI for conclusions | Green/yellow/red use matrix | Published internal policy |
| Human verification | Fake citations, wrong record facts | Citation and record checklist | Reviewer initials, dated version |
| Source grounding | Unsupported medical and damages claims | Require record cites for key statements | Output with cites, Bates references |
| Confidentiality | Data leakage, improper sharing | Access controls, retention rules | Access logs, retention schedule |
| Filing safeguards | Court sanctions, credibility loss | No blind copy-paste into filings | Review notes, final approval record |
| Audit trail | Inability to investigate mistakes | Prompt/output logging standard | Matter log entries |

Where TrialBase AI fits (without changing your ethics rules)

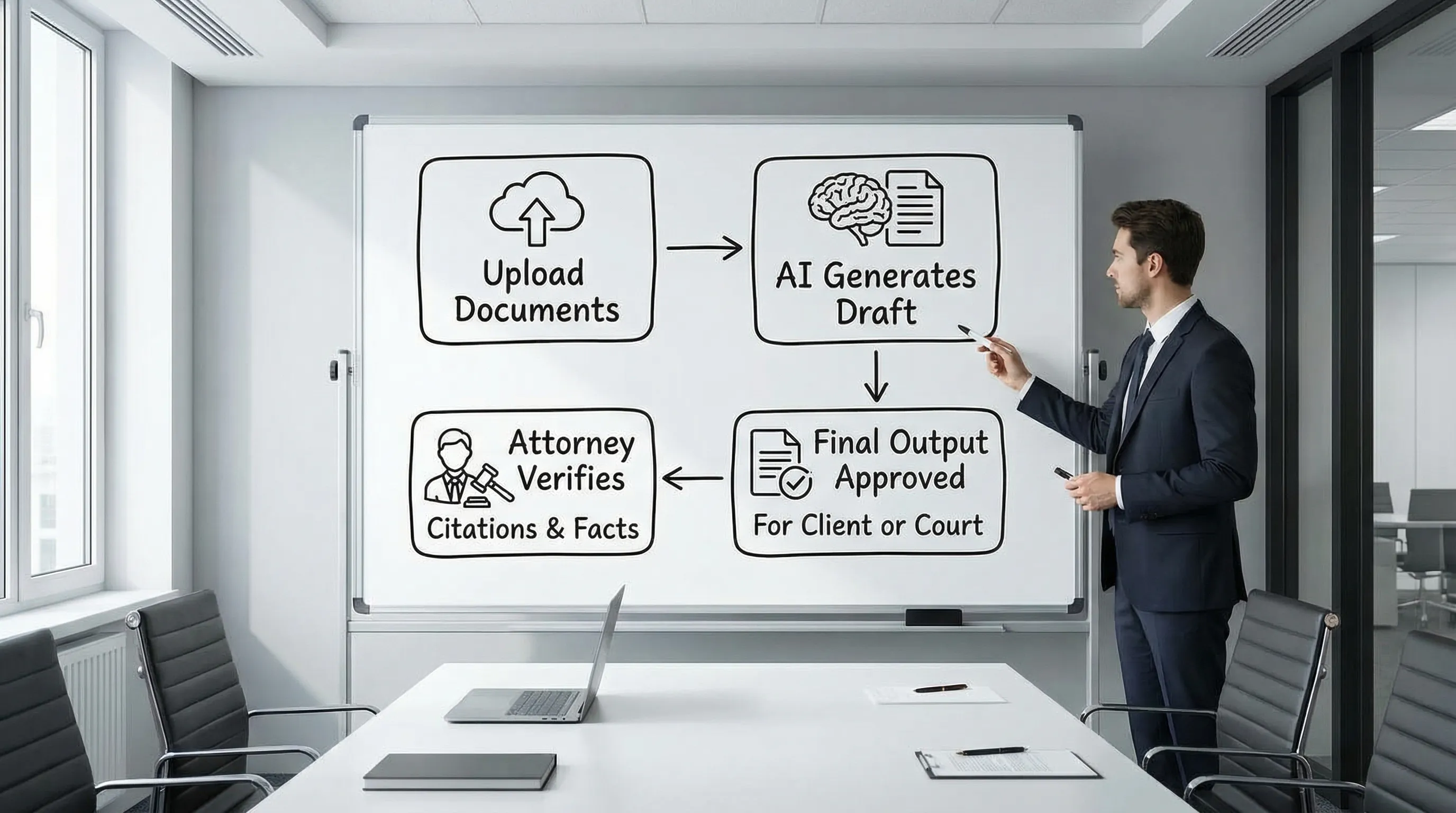

Policies are only useful if they are easy to follow. Litigation support platforms can help by turning the policy into a repeatable workflow: upload, analyze, generate, review, and export.

TrialBase AI is designed for litigation teams that need case-ready outputs in minutes, such as demand letters, medical summaries, and deposition outlines, while keeping the work product inside a unified workflow. Whatever tool you choose, the key is that it supports your review standards, your confidentiality obligations, and your record-keeping.

Frequently Asked Questions

Do law firms need an AI policy if only one lawyer uses AI? Yes. Even a one-page policy reduces risk because it standardizes verification, confidentiality, and what can be used in filings.

What is the fastest way to prevent hallucinated citations? Adopt a hard rule: no citation goes out the door unless it has been verified in a primary source database, and that verification is documented.

Should we ban AI from drafting demand letters? Not necessarily. Many firms allow AI drafts, but require human review, record grounding for facts and damages, and careful tone control.

What should we document for defensibility? At minimum: the document set used, the output type, the reviewer, the date, and the final approved version.

Make your AI workflow match your standards

If your firm is serious about using AI without risking bad outputs, the next step is to pair clear policies with tooling that supports repeatable review.

Explore how TrialBase AI helps litigation teams turn uploaded documents into demand letters, medical summaries, deposition outlines, and more, delivered in minutes, so you can move faster while keeping attorney oversight where it belongs.
