AI Legal Services: Use Cases That Hold Up Under Scrutiny

AI can draft a memo in seconds, but litigation is not a demo. Your work product has to survive internal review, client expectations, opposing counsel, and sometimes a judge who will not tolerate “the model said so.” The safest way to adopt AI legal services is to focus on use cases where inputs are bounded, outputs are verifiable, and the human lawyer’s judgment stays in control.

Below are practical, litigation-forward use cases that can hold up under scrutiny, plus the guardrails that make them defensible.

What “holds up under scrutiny” actually means

In litigation, scrutiny is usually about four things:

- Accuracy and traceability: Can you point to the record that supports each key statement?

- Confidentiality and privilege: Is data handled in a way that preserves privilege and complies with your obligations?

- Competence and supervision: Are lawyers reviewing AI output in a way consistent with their professional duties (see ABA Model Rules 1.1 and 5.3)?

- Repeatability: Could a teammate reproduce the result using the same document set and prompt assumptions?

A helpful mental model is the risk-management approach of the NIST AI Risk Management Framework, which is organized around four core functions (Govern, Map, Measure, Manage). In practice: define the use, measure the risk, manage it with controls, and document your process.

Use cases for AI legal services that are easiest to defend

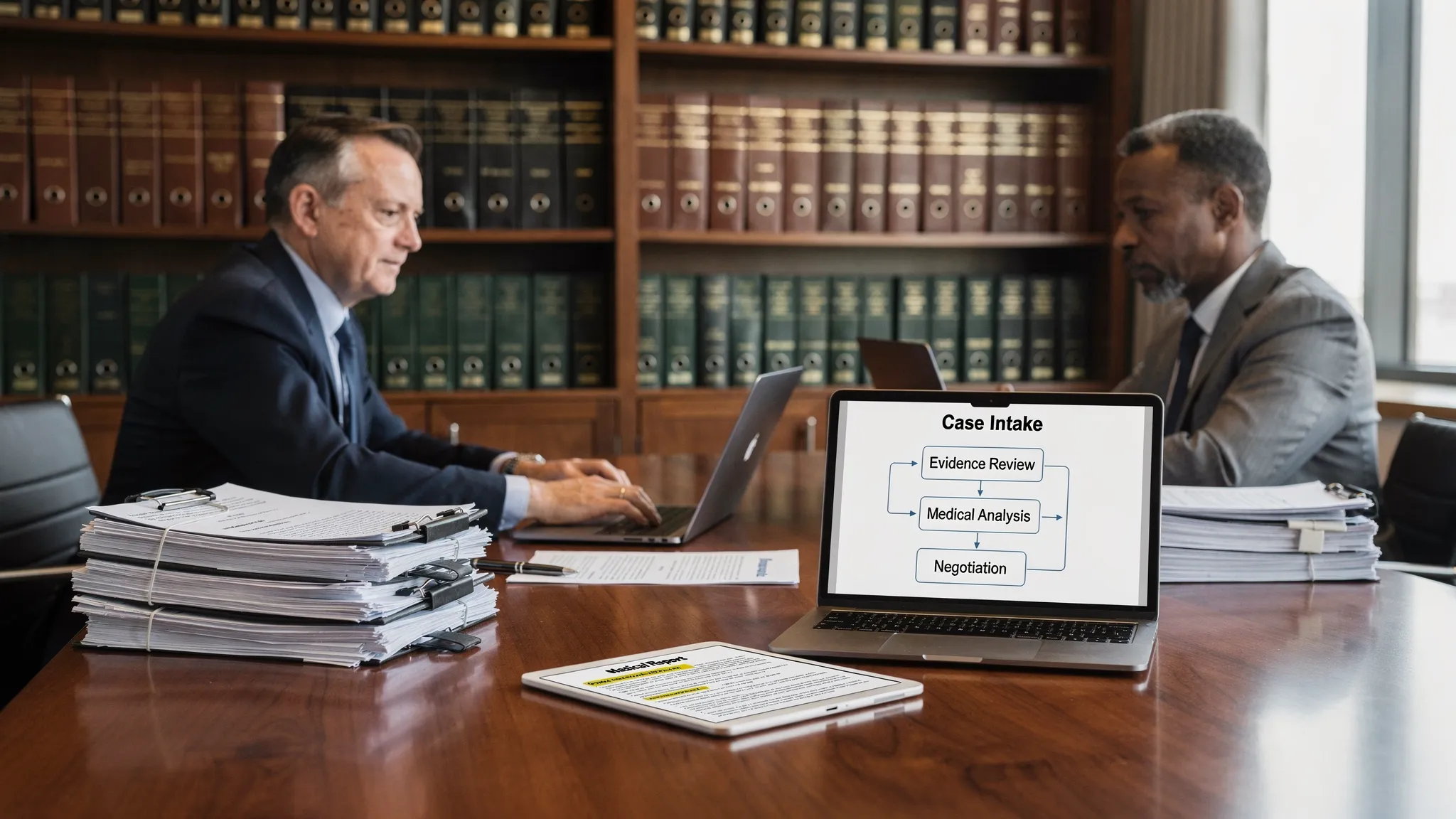

1) Case intake triage and issue spotting (bounded, high leverage)

Best for: plaintiff and defense teams who receive messy intakes, incident reports, photos, and early medical records.

Why it holds up: Intake triage is primarily an internal workflow. You are not filing the AI output; you are using it to organize and prioritize.

What “good” looks like:

- AI extracts basic facts into a structured format (dates, parties, providers, injuries, policies).

- You treat it as a starting point, then confirm against the source docs.

- You preserve a clean distinction between “extracted from record” and “attorney assessment.”
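For teams that keep intake data in a spreadsheet or database, the "extracted from record" versus "attorney assessment" distinction can be enforced in the data itself. The sketch below is illustrative only: `IntakeItem`, its `origin` labels, and the `source` locator are hypothetical names, not the schema of TrialBase AI or any other tool. It assumes that every record-derived fact must carry a source location, while an attorney assessment is labeled as such instead.

```python
from dataclasses import dataclass
from typing import List, Literal, Optional, Tuple

# Hypothetical origin labels: "record" for facts pulled from the documents,
# "attorney_assessment" for the lawyer's own judgment about those facts.
Origin = Literal["record", "attorney_assessment"]


@dataclass(frozen=True)
class IntakeItem:
    category: str                  # e.g. "date", "party", "provider", "injury", "policy"
    text: str                      # the extracted fact or the attorney's assessment
    origin: Origin
    source: Optional[str] = None   # hypothetical locator, e.g. "Incident report p. 1"

    def __post_init__(self) -> None:
        # A record-derived fact must say where it came from.
        if self.origin == "record" and not self.source:
            raise ValueError(f"record fact lacks a source: {self.text!r}")


def split_by_origin(items: List[IntakeItem]) -> Tuple[List[IntakeItem], List[IntakeItem]]:
    """Return (record_facts, attorney_assessments), keeping the two apart."""
    record = [i for i in items if i.origin == "record"]
    assessment = [i for i in items if i.origin == "attorney_assessment"]
    return record, assessment
```

Rejecting an unsourced "record" fact at creation time means an AI-extracted statement cannot quietly enter the file without a pointer back to the document it came from.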

2) Medical record summaries with citations to the page/visit

Best for: PI, med mal, product liability, workers’ comp, and any case where medical chronology drives value.

Why it holds up: Medical summaries are defensible when they are document-grounded and easily auditable. The standard you want is “show me where that came from.”

Operational guardrail: Require every material statement (diagnosis, imaging result, restrictions, causation language) to map to a source location. If a tool cannot keep you close to the underlying record, it is harder to trust under time pressure.
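The guardrail is mechanical enough to check automatically before a summary reaches attorney review. The sketch below assumes a summary is stored as a list of entries, each tagged with a category and an optional page number or visit identifier. `SummaryEntry`, `MATERIAL`, and `uncited_material` are hypothetical names chosen for illustration, and the material categories simply mirror the examples in the guardrail above.

```python
from dataclasses import dataclass
from typing import List, Optional

# Categories treated as material, mirroring the guardrail's examples.
MATERIAL = {"diagnosis", "imaging", "restrictions", "causation"}


@dataclass
class SummaryEntry:
    category: str
    statement: str
    page: Optional[int] = None    # page in the medical record (hypothetical field)
    visit: Optional[str] = None   # visit or encounter identifier (hypothetical field)


def uncited_material(entries: List[SummaryEntry]) -> List[SummaryEntry]:
    """Return material entries that cannot be traced to a page or a visit."""
    return [
        e for e in entries
        if e.category in MATERIAL and e.page is None and e.visit is None
    ]
```

An empty result does not mean the summary is correct; it only means every material statement has a location a reviewer can open and check.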

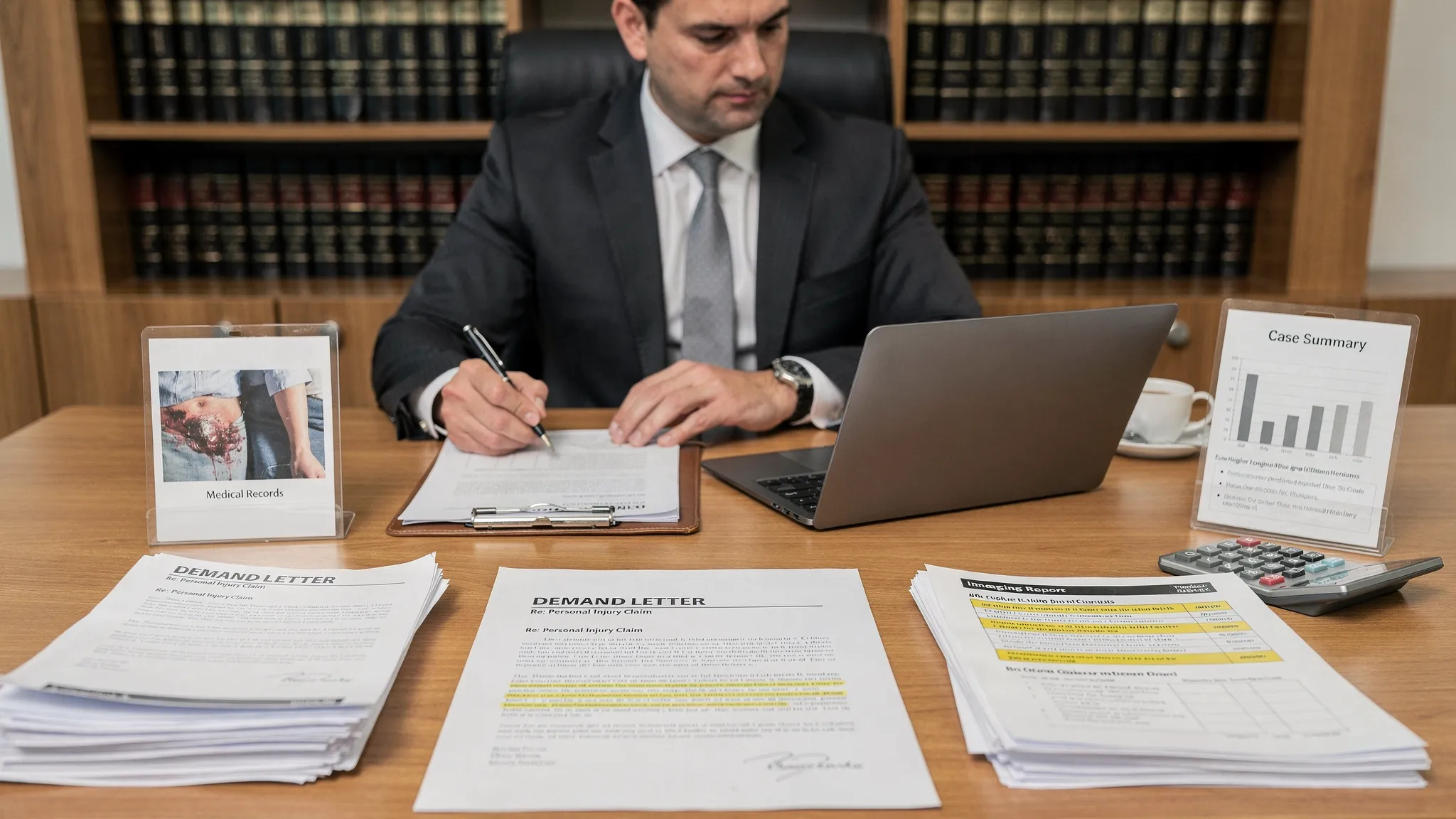

3) Demand letters that are record-driven and lawyer-edited

Best for: pre-suit resolution and early settlement posture.

Why it holds up: Demand letters are persuasive writing based on known categories: liability theory, damages narrative, treatment timeline, specials, and a settlement ask. AI is strong at producing a first draft, while the attorney must own the final strategy and tone.

How to keep it defensible:

- Lock the demand’s factual section to the verified medical chronology and incident facts.

- Add a final attorney pass for venue-specific phrasing, attachments, and compliance.

- Avoid letting the model “estimate” numbers or invent future care. If it is not in your file or your expert plan, it does not belong.

If your workflow includes tools like TrialBase AI, this is a natural fit: you upload documents and generate a draft demand letter, then edit and finalize as counsel.

4) Deposition outlines that track pleadings, records, and themes

Best for: preparing partner-ready outlines quickly, especially with multiple witnesses.

Why it holds up: A deposition outline is inherently a planning document. It is defensible when it is tethered to the pleadings, discovery responses, and key records.

Practical approach:

- Use AI to propose topic blocks (background, timeline, prior conditions, mechanism, mitigation).

- Insert exhibit references from your file.

- Add “goal questions” that reflect the elements you must prove (or defeat).

Tools that generate deposition outlines from your uploaded record can save time, but your outline still needs an attorney’s sequencing, impeachment plan, and exhibit strategy.

5) Discovery simplification: document grouping, summaries, and timelines

Best for: small-to-mid teams who need fast orientation in large productions.

Why it holds up: The defensible version of AI in discovery is not “decide relevance for me.” It is “reduce my reading load,” with the lawyer still making the relevance determinations.

Examples that tend to be safe and useful:

- Generate matter timelines from a defined set of documents.

- Summarize long PDFs into issue-tagged briefs.

- Create a “what we have, what we are missing” checklist aligned to claims and defenses.

For discovery obligations, keep your process consistent with the applicable procedural rules and preservation duties (see, e.g., Federal Rule of Civil Procedure 26 and the related discovery rules).

6) Trial prep materials: chronologies, exhibit callouts, and theme lists

Best for: narrowing a case to the cleanest story.

Why it holds up: Trial materials are defensible when they are treated as work product drafts that undergo attorney verification, not as final truth.

AI can help by:

- Producing draft chronologies and witness-by-issue maps.

- Surfacing contradictions and gaps for follow-up.

- Generating trial-ready summaries that you then conform to your exhibit list.

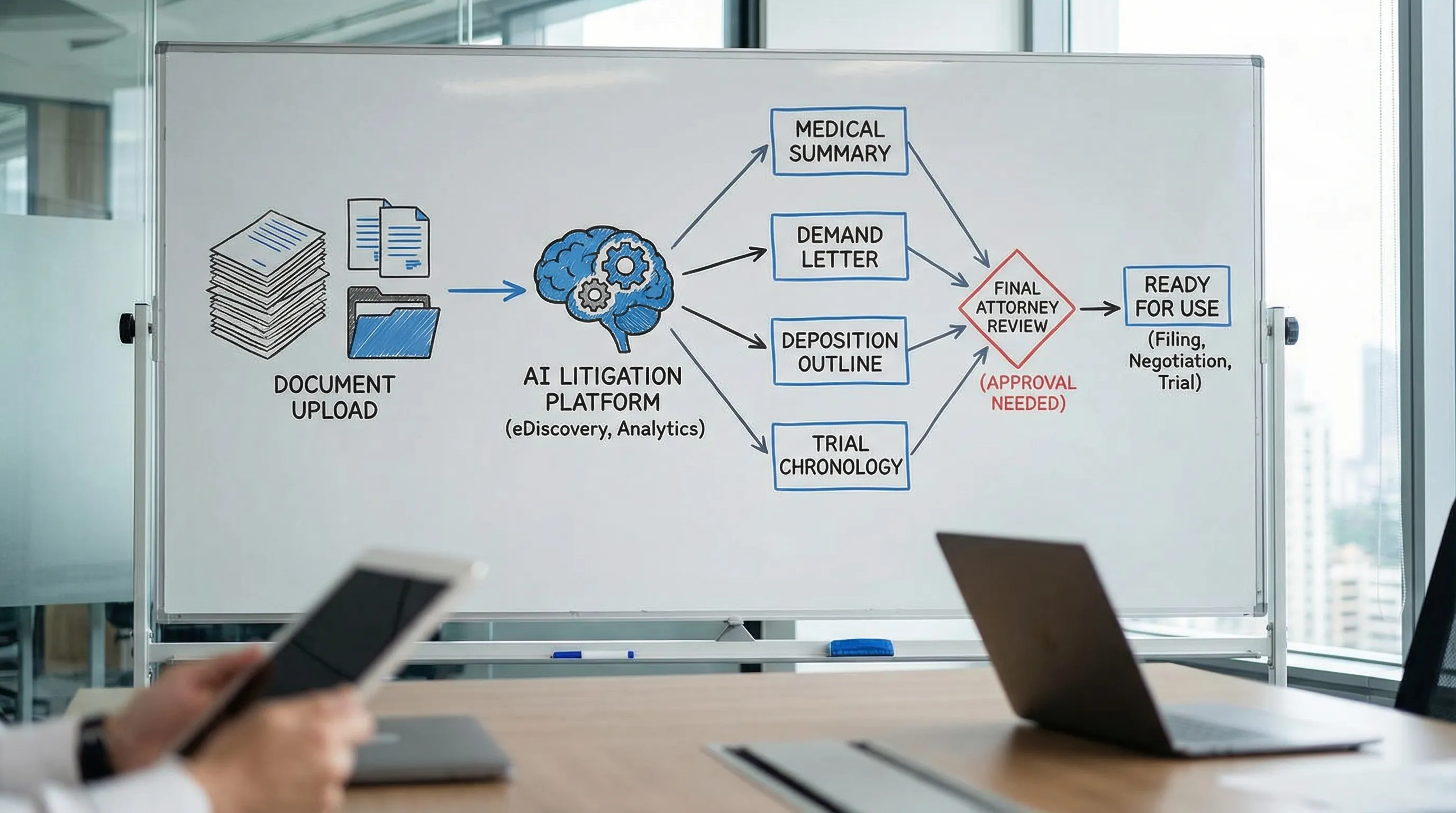

Platforms positioned as “intake to verdict” litigation support (including TrialBase AI) typically focus here: turning uploaded documents into litigation-ready drafts like medical summaries and trial materials in minutes, inside a unified workflow.

A simple defensibility checklist you can adopt tomorrow

To make AI legal services hold up under scrutiny, standardize the same controls across use cases:

- Defined input set: Specify which documents are “in scope” (and which are excluded).

- Citation expectation: For any factual claim that matters, require a pointer back to the record.

- Human-in-the-loop signoff: One accountable reviewer owns the final version.

- Versioning: Save drafts and final outputs so your team can reconstruct how the work product was created.

- Confidentiality review: Confirm how your vendor handles data, retention, and access, and align it with your firm’s policies.
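If your team tracks matters in software, the first four controls can be expressed as a pre-finalization check. The sketch below is a minimal illustration under stated assumptions: documents are identified by hypothetical IDs such as "DOC-001", drafts are saved as plain text, and `WorkProduct` and its fields are invented names, not any vendor's API. Confidentiality review is left out because it turns on vendor terms and firm policy rather than on anything a script can verify.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class WorkProduct:
    in_scope: Set[str]               # document IDs the team defined as in scope
    cited_docs: Set[str]             # document IDs the draft actually cites
    reviewer: Optional[str] = None   # the one accountable reviewer who signs off
    versions: List[str] = field(default_factory=list)  # saved draft texts, oldest first

    def save_version(self, text: str) -> int:
        """Keep the full draft so the team can reconstruct how it evolved."""
        self.versions.append(text)
        return len(self.versions)    # 1-based version number

    def problems(self) -> List[str]:
        """List every control the draft currently fails."""
        issues = []
        out_of_scope = self.cited_docs - self.in_scope
        if out_of_scope:
            issues.append(f"cites documents outside the input set: {sorted(out_of_scope)}")
        if not self.reviewer:
            issues.append("no accountable reviewer has signed off")
        if not self.versions:
            issues.append("no saved versions to reconstruct the work product")
        return issues
```

Returning a list of problems, rather than a single pass/fail flag, gives the reviewer a concrete to-do list before signoff.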

Quick reference table: what’s defensible, and why

| Use case | Typical inputs | Output you can defend | What makes it hold up under scrutiny |
|---|---|---|---|
| Intake triage | Intake notes, incident report, early records | Structured fact summary, issue list | Internal-facing, easy to validate, reduces missed facts |
| Medical summary | Treatment notes, imaging, billing | Chronology with key events | Traceable to record, lawyer verifies key assertions |
| Demand letter draft | Verified facts, medical chronology | Persuasive draft letter | Bounded format, attorney edits, no invented damages |
| Deposition outline | Pleadings, records, discovery answers | Topic blocks and questions | Planning tool, tethered to exhibits and elements |
| Discovery simplification | Productions, PDFs, correspondence | Timelines, doc summaries | Reduces reading load, lawyer keeps relevance decisions |
| Trial prep drafts | Core exhibits, witness info | Chronologies, witness-by-issue maps | Draft work product, validated against exhibit list |

Where teams get burned (and how to avoid it)

Problems usually come from the same three mistakes:

- Letting AI create “new facts.” Fix this by requiring citations, or by explicitly labeling any unsupported output as a hypothesis to verify.

- Using AI for final legal conclusions without review. Use AI to accelerate drafting and organization, then have a lawyer apply judgment and finalize.

- Treating vendor tools as a black box. Ask direct questions about data handling, access controls, and whether your content is used to train models. If you cannot get clear answers, you cannot defend the workflow.

Putting it into practice with TrialBase AI

If you want AI legal services that translate directly into litigation work product, focus on workflows that convert your uploaded documents into tangible deliverables: demand letters, medical summaries, deposition outlines, and trial materials. That is the category TrialBase AI is built for.

The adoption path that tends to work best is narrow and measurable: pick one use case (often medical summaries or demand letters), define your review standard (citations, signoff, versioning), run a short pilot with real files, then expand.

If you keep the work grounded in the record and maintain attorney control over the final output, AI can save time without creating credibility risk.
